- cross-posted to:

- technology@lemmy.world

- cross-posted to:

- technology@lemmy.world

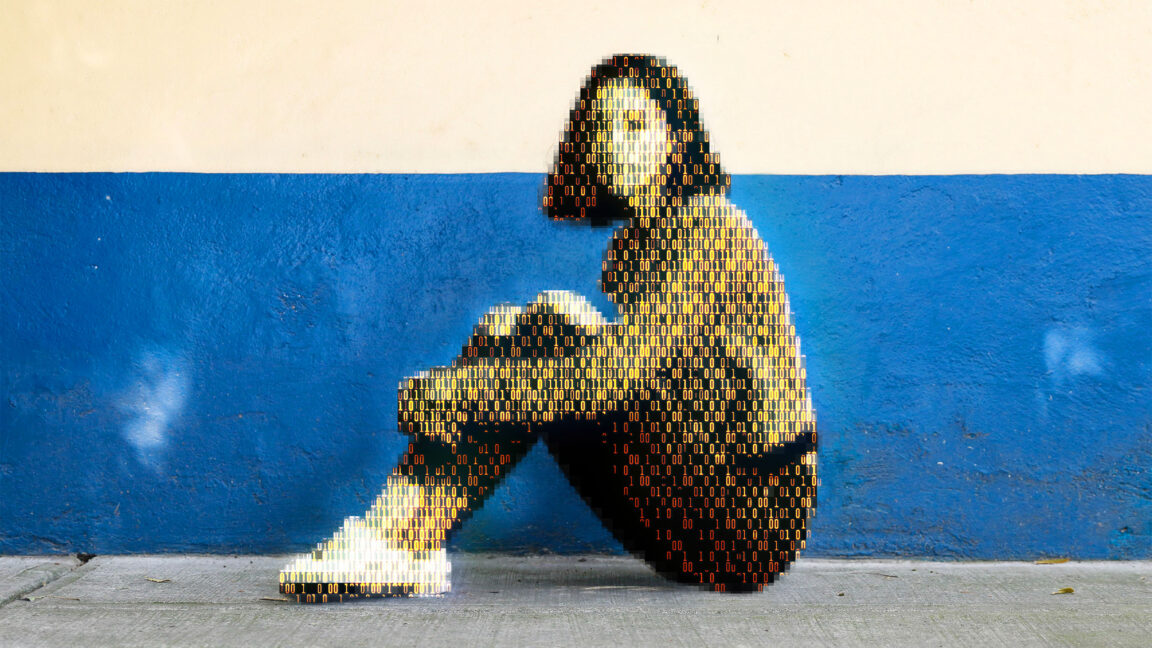

Today, a prominent child safety organization, Thorn, in partnership with a leading cloud-based AI solutions provider, Hive, announced the release of an AI model designed to flag unknown CSAM at upload. It’s the earliest AI technology striving to expose unreported CSAM at scale.

You can’t merge a generative model and a classification model. You can run then in series to get a bunch of false positives/hallucinations, but you can’t make it generate something from the other model.

When I said a “general purpose model that knows what children look like” I didn’t mean the classification model from the article. I meant a normal, general purpose image generation model. When I said “that knows what children look like” I mean part of its training set is on children, because it’s sort of trained a little on everything. When I said “pornographic model” I mean a model trained exclusively on NSFW content (and not including any CSAM, but that may be generous depending on how much care was out into the model’s creation).