I’ve tried ZeroTier before and it doesn’t hold a candle to Tailscale.

Tailscale

It’s WireGuard-based and it’s completely free for up to 100 devices.

13·21 days ago

Pixelfed is the alternative we want

1·1 month ago

I posted my side across many comments. But the same argument applies to everyone saying the opposite.

2·1 month ago

Ok, now that’s awesome! I’m installing it now. Thanks!

2·1 month ago

I’m using a Pixel 7 right now, and I love the camera. I’m not sure I’ll be happy if I lose all the camera features.

Thanks for replying

1·1 month ago

GrapheneOS isn’t a complete solution, especially if you still use things like Facebook and WhatsApp. Although it is a massive plus to privacy.

Quick question. I’ve been hesitating with jumping to Graphene for a little while now. The two things that have held me back are losing access to Google Camera and Android Pay (or Google Pay, or Google Wallet, or Android Wallet. Whatever Google’s calling it these days).

The Google Wallet feature I think has taken care of itself. They pushed an update that requires you to re-authenticate after the initial tap for “security”. Which means half the time the transaction fails and the cashier has to redo the payment process. So I just gave up and have gone back to tapping with my cards directly for the past month.

So that just leaves the Google Camera. How’s the quality with Graphene?

2·1 month ago

I’ve been hearing about them being wrong fairly frequently, especially on darker-skinned people, for a long time now.

I can guarantee you haven’t. I’ve worked in the FR industry for a decade and I’m up to speed on all the news. There’s a story about a false arrest from FR at most once every 5 or 6 months.

You don’t see any reports from the millions upon millions of correct detections that happen every single day. You just see the one off failure cases that the cops completely mishandled.

I’m assuming that of Apple because it’s been around for a few years longer than the current AI craze has been going on.

No it hasn’t. FR systems have been around a lot longer than Apple devices doing FR. The current AI craze is mostly centered around LLMs; object detection and FR systems have been evolving for more than two decades.

We’ve been doing facial recognition for decades now, with purpose built algorithms. It’s not much of a leap to assume that’s what they’re using.

Then why would you assume companies doing FR longer than the recent “AI craze” would be doing it with “black boxes”?

I’m not doing a bunch of research to prove the point.

At least you proved my point.

2·1 month ago

people with totally different facial structures get identified as the same person all the time with the “AI” facial recognition

All the time, eh? Gonna need a citation on that. And I’m not talking about just one news article that pops up every six months. And nothing that links back to the UCLA’s 2018 misleading “report”.

I’m assuming Apple’s software is a purpose built algorithm that detects facial features and compares them, rather than the black box AI where you feed in data and it returns a result.

You assume a lot here. People have this conception that all FR systems are trained blackbox models. This is true for some systems, but not all.

The system I worked with, which ranked near the top of the NIST FRVT reports, did not use a trained AI algorithm for matching.

1·1 month ago

I think from a purely technical point of view, you’re not going to get FaceID kind of accuracy on theft prevention systems. Primarily because FaceID uses IR array scanning within arm’s reach from the user, whereas theft prevention is usually scanned from much further away. The distance makes it much harder to get the fidelity of data required for an accurate reading.

This is true. The distance definitely makes a difference, but there are systems out there that get incredibly high accuracy even with surveillance footage.

1·1 month ago

But for everyone else who is just trying to live their life, this can be extremely invasive technology.

Now here’s where I drop what seems like a whataboutism.

You already have an incredibly invasive system tracking you. It’s the phone in your pocket.

There’s almost nothing a widespread FR system could do to a person’s privacy that isn’t already covered by phone tracking.

Edit: and that includes the CCTV systems that have already existed for decades. /edit

Even assuming an FR system is on every street corner, at best it knows where you are, when you’re there, and maybe who you interact with. That’s basically it. And that will be limited to just the company/org that operates that system.

Your cellphone tracks where you are, when you’re there, who’s with you, the sites you visit, your chats, when you’re at home or at a friend’s place, how long you’re there, and it can potentially listen to your conversations and activate your camera. On and on.

On the flip side, an FR system can notify stores and places when a previous shoplifter or violent person arrives at the store (not trying to fear monger, just an example) and a cellphone wouldn’t be able to do that.

The bogeyman that everyone sees with FR and privacy doesn’t exist.

Edit 2: as an example, there was an ad SDK a number of years ago that, whenever you had an app open that used it, would listen to your microphone and pick up high-pitched tones from TV ads to identify which ads were playing nearby or on your TV.

https://www.forbes.com/sites/thomasbrewster/2015/11/16/silverpush-ultrasonic-tracking/

1·1 month ago

We were cheaper on both hardware and software costs than just about anyone else, and we placed easily in the top 5 for performance and accuracy.

The main issue was that COVID came around, and since we’re not a US company and the vast majority of interest was in the US, we were dead in the water.

What I’ve learned through the years is that the best rarely costs the most. Most corporate/vendor software out there is chosen based on just about every consideration aside from quality.

5·1 month ago

Based on your comments I feel that you’re projecting the confidence in that system onto the broader topic of facial recognition in general; you’re looking at a good example and people here are (perhaps cynically) pointing at the worst ones. Can you offer any perspective from your career experience that might bridge the gap? Why shouldn’t we treat all facial recognition implementations as unacceptable if only the best – and presumably most expensive – ones are?

It’s a good question, and I don’t have the answer to it. But a good example I like to point at is the ACLU’s announcement of their test on Amazon’s Rekognition system.

They tested the system using the default value of 80% confidence, and their test resulted in 20% false identification. They then boldly claimed that FR systems are all flawed and no one should ever use them.

Amazon even responded saying that the ACLU’s test with the default values was irresponsible, and Amazon’s right. This was before such public backlash against FR, and the reasoning for a default of 80% confidence was the expectation that most people using it would do silly stuff like celebrity lookalikes. That being said, it was stupid to set the default to 80%, but that’s just hindsight speaking.

My point here is that, while FR tech isn’t perfect, the public perception is highly skewed. If there were a daily news report detailing the number of correct matches across all systems, the few showing a false match would seem ridiculous. The overwhelming majority of news reports on FR are about failure cases. No wonder most people think the tech is fundamentally broken.

A rhetorical question aside from that: is determining one’s identity an application where anything below the unachievable success rate of 100% is acceptable?

I think most systems in use today are fine in terms of accuracy. The consideration becomes “how is it being used?” That isn’t to say that improvements aren’t welcome, but in some cases it’s like trying to use the hook on the back of a hammer as a screw driver. I’m sure it can be made to work, but fundamentally it’s the wrong tool for the job.

FR in a payment system is just all wrong. It’s literally forcing the use of a tech where it shouldn’t be used. FR can be used for validation if increased security is needed, like accessing a bank account. But never as the sole means of authentication. You should still require a bank card + PIN, then the system can do FR as a kind of 2FA. The trick here would be to first, use a good system, and then second, lower the threshold to something that borders on “fairly lenient”. That way you eliminate any false rejections while still maintaining an incredibly high level of security. In that case it’s effectively impossible (though the odds can never be truly zero) for your bank card AND PIN to be stolen by someone who looks so much like you that they trick the FR. And if that person is being targeted by a threat actor who can coordinate such things then they’d have the resources to just get around the cyber security of the bank from the comfort of anywhere in the world.
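As a rough sketch of the “card + PIN first, FR as a second factor” flow: the function name, the 0.0 to 1.0 score scale, and the threshold value below are all made up for illustration and don’t come from any real banking or FR product.

```python
# Hypothetical lenient threshold on an assumed 0.0-1.0 similarity scale.
LENIENT_THRESHOLD = 0.6

def authorize(card_valid: bool, pin_valid: bool, face_score: float,
              threshold: float = LENIENT_THRESHOLD) -> bool:
    """Approve only if card AND PIN check out, then use FR as a second factor."""
    # FR is never the sole means of authentication: without a valid
    # card and PIN the face score is not even considered.
    if not (card_valid and pin_valid):
        return False
    # The threshold can be lenient because an attacker would already
    # need the card and the PIN to get this far.
    return face_score >= threshold

print(authorize(True, True, 0.65))   # legitimate user, so-so photo
print(authorize(False, True, 0.99))  # great face match but no valid card
print(authorize(True, True, 0.20))   # stolen card + PIN, wrong face
```

The lenient threshold keeps false rejections of the real card holder rare, while the card and PIN carry most of the security.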

Security in every single circumstance is a trade-off with convenience. Always, and in every scenario.

FR works well with existing access control systems. Swipe your badge card, then it scans you to verify you’re the person identified by the badge.

FR also works well in surveillance, with the incredibly important addition of human-in-the-loop. For example, the system I worked on simply reported detections to a SOC (security operations center), with all the general info about the detection including the live photo and the reference photo. Then the operator would have to look at the details and manually confirm or reject the detection. The system made no decisions; it simply presented the info to an authorized person.
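A minimal sketch of that human-in-the-loop pattern, assuming hypothetical names (`Detection`, `review`, `actionable`) and a plain in-memory list. This is not the actual system’s code, just an illustration that the software only presents detections and a person makes the call.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional

class Status(Enum):
    PENDING = "pending"
    CONFIRMED = "confirmed"
    REJECTED = "rejected"

@dataclass
class Detection:
    live_photo: str        # path/ID of the camera capture (hypothetical)
    reference_photo: str   # path/ID of the database photo (hypothetical)
    score: float           # matcher similarity score
    status: Status = Status.PENDING
    reviewed_by: Optional[str] = None

def review(detection: Detection, operator: str, is_same_person: bool) -> Detection:
    """Record the operator's manual decision; the system never decides itself."""
    detection.status = Status.CONFIRMED if is_same_person else Status.REJECTED
    detection.reviewed_by = operator
    return detection

def actionable(detections: List[Detection]) -> List[Detection]:
    """Only detections a human has confirmed may trigger any follow-up."""
    return [d for d in detections if d.status is Status.CONFIRMED]

queue = [
    Detection("cam1_0001.jpg", "db_0042.jpg", 0.97),
    Detection("cam2_0007.jpg", "db_0108.jpg", 0.91),
]
print(len(actionable(queue)))              # nothing is actionable before review
review(queue[0], "operator_a", True)       # operator agrees the photos match
review(queue[1], "operator_a", False)      # operator sees they look nothing alike
print([d.live_photo for d in actionable(queue)])
```

A high score alone never moves a detection out of `PENDING`; only the operator’s decision does.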

This is the key portion that seems to be missing in all news reports about false arrests and whatnot. I’ve looked into all the FR related false arrests and from what I could determine none of those cases were handled properly. The detection results were simply taken as gospel truth and no critical thinking was applied. In some of those cases the detection photo and reference (database) photo looked nothing alike. It’s just that the people operating those systems are either idiots or just don’t care. Both of those are policy issues entirely unrelated to the accuracy of the tech.

1·1 month ago

So you are saying yourself that your argument has nothing to do with what’s in the article?..

OP said “reliability standards with a lot of nines have to be met”. All I’m saying is that we’re already there.

2·1 month ago

Np.

As someone else pointed out in another comment, I’ve been stating the x% accuracy number incorrectly. It’s just a colloquial way of conveying the accuracy. The truth is that no one in the industry uses “percent accuracy”; they use FMR (false match rate) and FNMR (false non-match rate), as well as some other metrics.
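For anyone unfamiliar with those two metrics, here is a small sketch of how they fall out of comparison scores at a given threshold. The scores are invented for illustration, and the helper name `fmr_fnmr` is hypothetical.

```python
def fmr_fnmr(genuine_scores, impostor_scores, threshold):
    """Return (FMR, FNMR) at a given similarity threshold.

    FMR:  fraction of impostor (different-person) comparisons that score
          at or above the threshold, i.e. false matches.
    FNMR: fraction of genuine (same-person) comparisons that score
          below the threshold, i.e. false non-matches.
    """
    false_matches = sum(1 for s in impostor_scores if s >= threshold)
    false_non_matches = sum(1 for s in genuine_scores if s < threshold)
    return (false_matches / len(impostor_scores),
            false_non_matches / len(genuine_scores))

# Made-up similarity scores on an assumed 0.0-1.0 scale.
genuine = [0.91, 0.88, 0.95, 0.62, 0.97]
impostor = [0.10, 0.35, 0.81, 0.22, 0.05, 0.40, 0.15, 0.30]

for t in (0.5, 0.8, 0.9):
    fmr, fnmr = fmr_fnmr(genuine, impostor, t)
    print(f"threshold={t}: FMR={fmr:.3f}, FNMR={fnmr:.3f}")
```

Raising the threshold pushes FMR down and FNMR up, which is why a single “accuracy percentage” doesn’t describe a system: the number depends on where the threshold is set.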

11·1 month ago

Edit: the truth is that saying x% accuracy isn’t entirely correct, because the numbers just don’t work that way. It’s just a way we convey the data to the average person. I can’t count the number of times I’ve been asked “ok, but what does that mean in terms of accuracy? What’s the accuracy percentage?”

And I understand what you’re saying now. Yes I did have the number written down incorrectly as a percentage. I’m on mobile this whole time doing a hundred other things. I added two extra digits.

1·1 month ago

You know what overfitting is, right?

As you reply to someone who spent a decade in the AI industry.

This has nothing to do with overfitting. Particularly because our matching algorithm isn’t trained on data.

The face detection portion is, but that’s simply finding the face in an image.

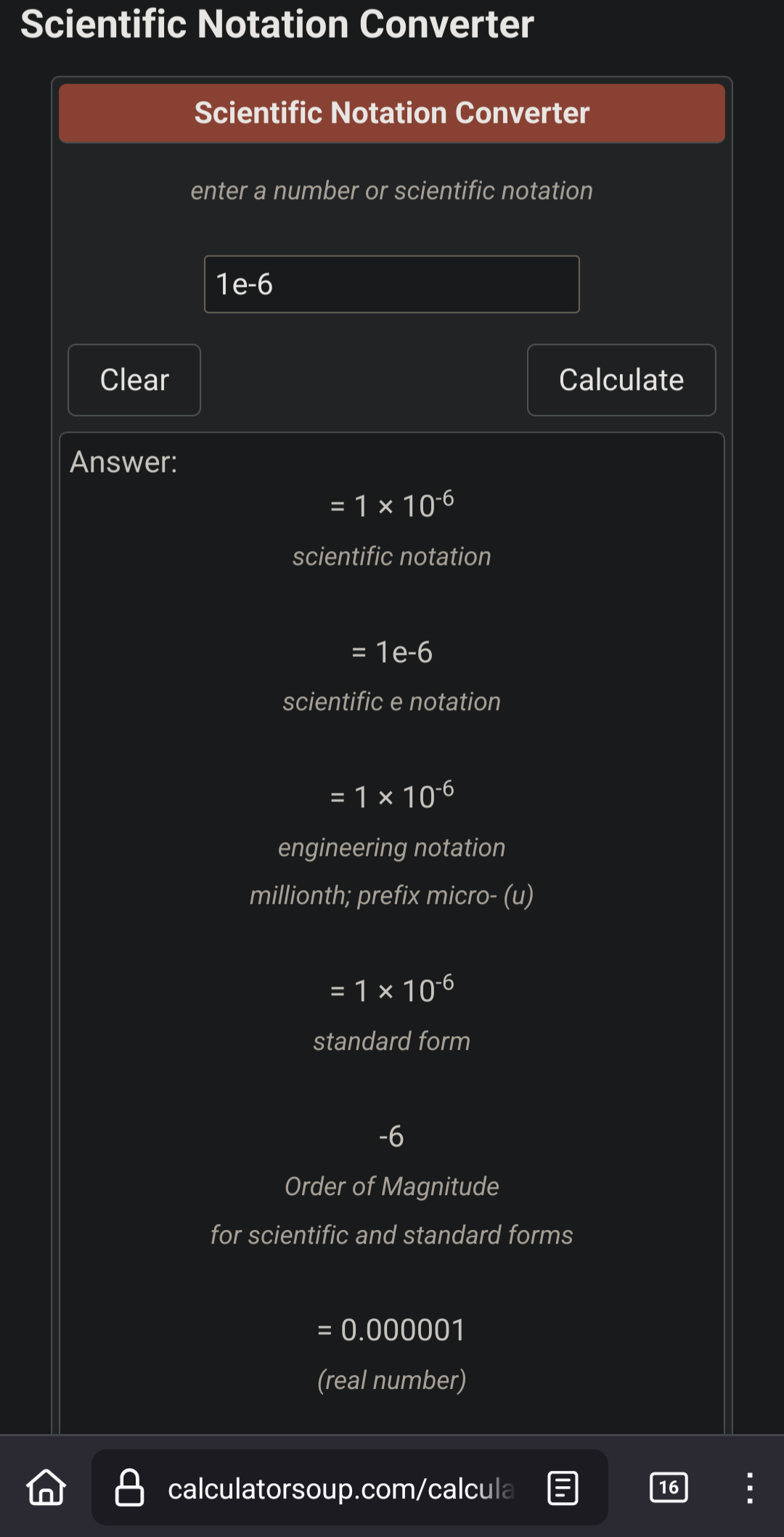

The system I worked with used a threshold value that equates to an FMR of 1e-07. And it wasn’t used in places like subways or city streets. The point I’m making is that in the few years of real world operation (before I left for another job) we didn’t see a single false detection. In fact, one of the facility owners asked us to lower the threshold temporarily to verify the system was actually working properly.
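To put an FMR of 1e-07 in perspective, here is some back-of-the-envelope arithmetic. It assumes every comparison is independent, and the comparison volumes are invented; only the FMR value comes from the comment above.

```python
import math

FMR = 1e-7  # false match rate quoted in the comment

def expected_false_matches(comparisons: int, fmr: float = FMR) -> float:
    """Expected number of false matches over a number of impostor comparisons."""
    return comparisons * fmr

def prob_at_least_one(comparisons: int, fmr: float = FMR) -> float:
    """P(at least one false match) = 1 - (1 - fmr)^n, assuming independence."""
    # log1p/expm1 keep the result accurate when fmr is tiny.
    return -math.expm1(comparisons * math.log1p(-fmr))

# Hypothetical volumes of impostor comparisons.
for n in (10_000, 1_000_000, 100_000_000):
    print(f"{n:>11,} comparisons: "
          f"expected false matches = {expected_false_matches(n):.3f}, "
          f"P(at least one) = {prob_at_least_one(n):.4f}")
```

Under these assumptions, a low-traffic facility can plausibly go a long time without a single false detection, while a deployment doing vastly more comparisons would expect some.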

2·1 month ago

Ya, most upvotes and downvotes are entirely emotionally driven. I knew I would get downvoted for posting all this. It happens on every forum, Reddit post, and Lemmy post. But downvotes don’t make the info I share wrong.

If it doesn’t work when your internet is out, then it’s not local.