With the demise of ESXi, I am looking for alternatives. Currently I have pfSense virtualized on four physical NICs plus a bunch of virtual ones, and it works great. Does Proxmox do this with anything like the ease of ESXi? Any other ideas?

Tossing in my vote for Proxmox. I’m running OPNsense as a VM without any issues. I did originally try pfSense, but didn’t like it for some reason (I genuinely can’t recall what it was).

Either way, Proxmox virtual networking has been relatively easy to learn.

> pfSense, but didn’t like it for some reason

Probably the shitbirds at Netgate put you off it, understandably.

No problem using multiple physical and virtual ports for pfSense in Proxmox.

Admittedly I have not dug too deeply into Proxmox but its learning curve appears kinda steep.

There are multiple guides on virtualizing pfSense in Proxmox, but the easiest is to simply PCI passthrough the NICs you wanna use.

I do recommend you leave a physical NIC for Proxmox itself, to maintain LAN access to it if your pfSense is down.

There could be driver issues doing this. I had a bad experience with Emulex NICs under OPNsense; Intel, OTOH, worked flawlessly. Switched back to virtual interfaces though, as they work about as well as a physical NIC.

It’s not too bad. I switched from ESXi to Proxmox about 2 years ago.

I run a virtualized OPNsense with 2 NICs passed through and another 2 virtual ones, so it can be done.

Hey! I have been using ESXi for about three years now. I bought two identical NICs, one for WAN and one for LAN. I also discovered I had to use the onboard LAN port (a 3rd port!) just to be able to access the web control. (Is that normal?)

Anyway, I want to move to Proxmox, and then virtualize my OPNSense like I have on ESXi.

I get so confused by how the adapters should be set up. Ideally I would love to have the LAN connect to a (dumb) switch and provide Wi-Fi. One thing I’ve never tried before is a VLAN to protect the LAN from the Wi-Fi traffic while still allowing some things to work, like streaming from the wired PC on the LAN to the NVIDIA Shield Pro, and keeping the Alexa/Echo system on a more restricted Wi-Fi.

Can I do all this? I’m thinking I can, but the hurdle of learning VLANs and configuring the new Proxmox (which I’m pretty damn new to) is daunting.

I’m ready to try this though. I have a 4G wireless plus WiFi system to keep the other half happy while I tinker to get it all working.

Thoughts/Tips? Anyone?

All doable, you might need a managed or smart switch though

I have 4 VLANs at home, plus untagged, all through Proxmox and a smart switch:

- one for wan

- one for web facing servers

- one for iot

- one for guest wifi

- rest of lab is untagged

Noted about the switch. What is tagging? What is its purpose, and where is it applied?

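VLAN tags. They’re what make VLANs work: each Ethernet frame carries a VLAN ID (802.1Q), so one cable or switch port can carry several separate networks. Frames that travel without a tag are “untagged”.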
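For the Proxmox side of tagging, here is a minimal sketch rather than anyone’s actual config. It assumes a single trunk NIC called `eno1`, a bridge called `vmbr0`, VM ID 100 and VLAN 30 for IoT; all of those are made-up values, not from this thread. It only writes the example stanza and command into a scratch directory so you can read them before touching `/etc/network/interfaces`.

```shell
#!/bin/sh
# Sketch only: writes an example VLAN-aware bridge stanza to a scratch file for review.
# eno1, vmbr0, VM ID 100 and VLAN 30 are illustrative assumptions, not values from this thread.
set -eu
OUT_DIR="${OUT_DIR:-./proxmox-vlan-sketch}"
mkdir -p "$OUT_DIR"

# Stanza in the style of Proxmox's /etc/network/interfaces.
# "inet manual" means the host itself takes no IP on this bridge.
cat > "$OUT_DIR/interfaces.vmbr0" <<'EOF'
auto vmbr0
iface vmbr0 inet manual
    bridge-ports eno1
    bridge-stp off
    bridge-fd 0
    bridge-vlan-aware yes
    bridge-vids 2-4094
EOF

# Command that would put a VM's first NIC on VLAN 30; saved to a file, not run.
echo "qm set 100 --net0 virtio,bridge=vmbr0,tag=30" > "$OUT_DIR/tag-vm-nic.txt"

echo "Wrote sketch files to $OUT_DIR"
```

The switch port facing `eno1` would then need to be a trunk carrying the same VLAN IDs, which is why a managed or smart switch comes into it.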

And in about 2 years you’ll switch to LXD/Incus. :P

I’m currently off work with a broken shoulder, have you just given me a project?

Ahahaha that’s up to you. All best for your shoulder!

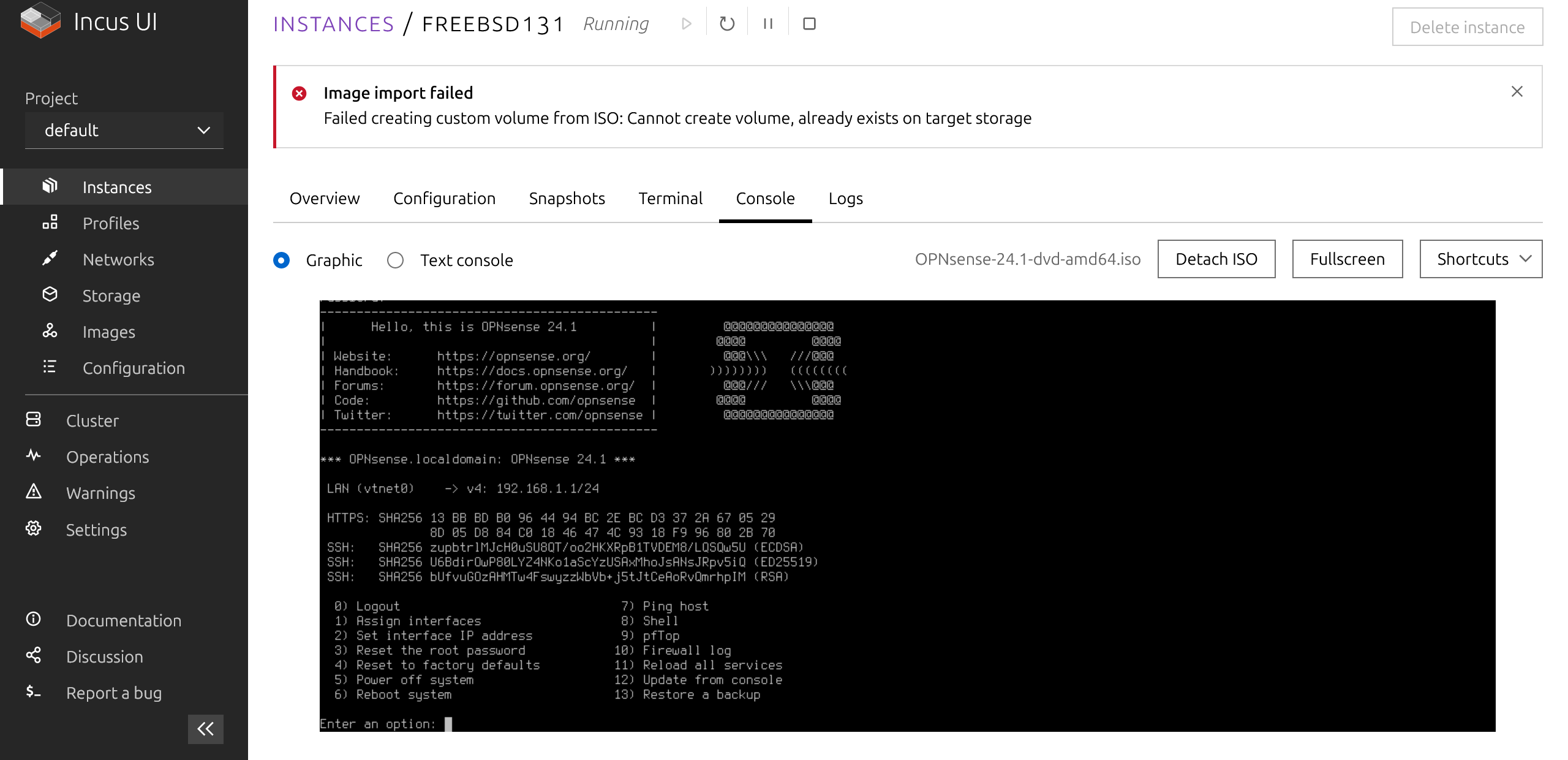

Incus looks cool. Have you virtualised a firewall on it? Is it as flexible as proxmox in terms of hardware passthrough options?

I find zero mentions online of opnsense on incus. 🤔

Yes it does run, but BSD-based VMs running on Linux have their quirks, as usual. This might be what you’re looking for: https://discuss.linuxcontainers.org/t/run-freebsd-13-1-opnsense-22-7-pfsense-2-7-0-and-newer-under-lxd-vm/15799

Since you want to run a firewall/router you can ignore LXD’s networking configuration and use your OPNsense to assign addresses and whatnot to your other containers. You can create whatever bridges / VLAN-based interfaces you want on your base system and then assign them to profiles/containers/VMs. For example, create a `cbr0` network bridge using `systemd-networkd` and then run `lxc profile device add default eth0 nic nictype=bridged parent=cbr0 name=eth0`. This will use `cbr0` as the default bridge for all machines, and LXD won’t provide any addressing or touch the network; it will just create an `eth0` interface on those machines, attached to the bridge. Then your OPNsense can be on the same bridge and do DHCP, routing etc. Obviously you can pass through entire PCI devices to VMs and containers if required as well.

When you’re searching around for help, instead of “Incus” you can search for “LXD”, as it tends to give you better results. Not sure if you’re aware, but LXD was the original project run by Canonical; recently it was forked into Incus (maintained by the same people who created LXD at Canonical) to keep the project open under the Linux Containers initiative.

OPNsense running in the Incus live demo. Fun!

Enjoy your 30 min of Incus :P

With Incus only officially supported in Debian 13, and LXD on the way out, should I get going with LXD and migrate to Incus later? Or use the Zabbly repo and switch over to official Debian repos when they become available? What’s the recommended trajectory, would you say?

It depends on how fast you want updates. I’m sure you know how Debian works, so if you install LXD from the Debian 12 repositories you’ll most likely be on 5.0.2 LTS forever. If you install from Zabbly you’ll get the latest and greatest right now.

My companies’ machines are all running LXD from Debian repositories, except for two that run from Zabbly for testing and whatnot. At home I’m running from the Debian repo. Migration from LXD 5.0.2 to a future version of Incus with Debian 13 won’t be a problem, as Incus is just a fork and stgraber and other members of the Incus/LXC projects either work very closely with Debian or also work in it.

Debian users will be fine one way or the other. I specifically asked stgraber about what’s going to happen in the future and this was his answer:

> We’ve been working pretty closely to Debian on this. I expect we’ll keep allowing Debian users of LXD 5.0.2 to interact with the image server either until trixie is released with Incus available OR a backport of Incus is made available in bookworm-backports, whichever happens first.

I hope this helps you decide.

I have another question, if you don’t mind: I have a debian/incus+opnsense setup now, created bridges for my NICs with systemd-networkd and attached the bridges to the VM like you described. I have the host configured with DHCP on the LAN bridge and ideally (correct me if I’m wrong, please), I’d like the host to not touch the WAN bridge at all (other than creating it and hooking it up to the NIC).

Here’s the problem: if I don’t configure the bridge on the host with either DHCP or a static IP, the OPNsense VM also doesn’t receive an IP on that interface. I have a `br0.netdev` to set up the bridge, a `br0.network` to connect the bridge to the NIC, and a `wan.network` to assign a static IP on br0, otherwise nothing works. (While I’m working on this, I have the WAN port connected to my old LAN, if it makes a difference.)

My question is: Is my expectation wrong or my setup? Am I mistaken that the host shouldn’t be configured on the WAN interface? Can I solve this by passing the pci device to the VM, and what’s the best practice here?

Thank you for taking a look! 😊

> Am I mistaken that the host shouldn’t be configured on the WAN interface? Can I solve this by passing the pci device to the VM, and what’s the best practice here?

Passing the PCI network card / device to the VM would make things more secure as the host won’t be configured / touching the network card exposed to the WAN. Nevertheless passing the card to the VM would make things less flexible and it isn’t required.

I think there’s something wrong with your setup. One of my machines has a `br0` and a setup like yours. `10-enp5s0.network` is the physical “WAN” interface:

```
root@host10:/etc/systemd/network# cat 10-enp5s0.network
[Match]
Name=enp5s0

[Network]
# -> note that we're just saying that enp5s0 belongs to the bridge, no IPs are assigned here.
Bridge=br0
```

```
root@host10:/etc/systemd/network# cat 11-br0.netdev
[NetDev]
Name=br0
Kind=bridge
```

```
root@host10:/etc/systemd/network# cat 11-br0.network
[Match]
Name=br0

[Network]
# -> In my case I'm also requesting an IP for my host but this isn't required. If I set it to "no" it will also work.
DHCP=ipv4
```

Now, I have a profile for “bridged” containers:

```
root@host10:/etc/systemd/network# lxc profile show bridged
config:
  (...)
description: Bridged Networking Profile
devices:
  eth0:
    name: eth0
    nictype: bridged
    parent: br0
    type: nic
(...)
```

And one of my VMs with this profile:

```
root@host10:/etc/systemd/network# lxc config show havm
architecture: x86_64
config:
  image.description: HAVM
  image.os: Debian
  (...)
profiles:
- bridged
(...)
```

Inside the VM the network is configured like this:

```
root@havm:~# cat /etc/systemd/network/10-eth0.network
[Match]
Name=eth0

[Link]
RequiredForOnline=yes

[Network]
DHCP=ipv4
```

Can you check if your config is done like this? If so it should work.
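If you want the host to stay off the WAN bridge entirely, here is a rough sketch of the “no” variant mentioned above. The interface and bridge names (`enp5s0`, `br0`) follow the example; the scratch directory and the `LinkLocalAddressing=no` / `IPv6AcceptRA=no` lines are my own additions, on the assumption that you also don’t want the host picking up IPv6 addresses on that bridge. It only writes the files to a local folder for review; nothing is installed into `/etc/systemd/network`.

```shell
#!/bin/sh
# Sketch only: writes systemd-networkd files for a WAN bridge that the host does not address.
# OUT_DIR is an illustrative scratch location; enp5s0 and br0 follow the example in this thread.
set -eu
OUT_DIR="${OUT_DIR:-./networkd-wan-sketch}"
mkdir -p "$OUT_DIR"

# The bridge device itself.
cat > "$OUT_DIR/11-br0.netdev" <<'EOF'
[NetDev]
Name=br0
Kind=bridge
EOF

# The physical WAN NIC is only enslaved to the bridge; it gets no IP.
cat > "$OUT_DIR/10-enp5s0.network" <<'EOF'
[Match]
Name=enp5s0

[Network]
Bridge=br0
EOF

# The host brings the bridge up but requests no addresses on it.
# LinkLocalAddressing/IPv6AcceptRA are assumptions added for this sketch.
cat > "$OUT_DIR/11-br0.network" <<'EOF'
[Match]
Name=br0

[Network]
DHCP=no
LinkLocalAddressing=no
IPv6AcceptRA=no
EOF

echo "Wrote sketch files to $OUT_DIR"
```

With this layout only the firewall VM attached to `br0` should ask for an address on the WAN side.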

Very informative, thank you.

I am generally very comfortable with Linux, but somehow this seems intimidating.

Although I guess I’m not using proxmox for anything other than managing VMs, network bridges and backups. Well, and for the feeling of using something that was set up by people who know what they’re doing and not hacked together by me until it worked…

> I guess I’m not using proxmox for anything other than managing VMs, network bridges and backups.

And LXD/Incus can do that for you as well. Install it and run `incus admin init` (`lxd init` on LXD); it will ask you a few questions and give you an automated setup with networking, storage etc., all running and ready for you to create VMs/containers.

What I was saying is that you can also ignore the default / automated setup and install things manually if you have other requirements.

It’s not too different from ESXi, things are just named differently in the webUI.

Proxmox is quite simple. As a former VCP, I find Proxmox more intuitive to use.

If you need specific help with Proxmox and/or ZFS, you might also look at posting on https://www.practicalzfs.com

And +1 for using OPNsense

My understanding is that Proxmox is one of the easier platforms to learn. I must say I’ve never used it personally.

Proxmox is great

Acronyms, initialisms, abbreviations, contractions, and other phrases which expand to something larger, that I’ve seen in this thread:

| Fewer Letters | More Letters |
|---|---|
| ESXi | VMWare virtual machine hypervisor |
| HTTP | Hypertext Transfer Protocol, the Web |
| IP | Internet Protocol |
| LTS | Long Term Support software version |
| LXC | Linux Containers |
| SSH | Secure Shell for remote terminal access |
| SSO | Single Sign-On |
| VPN | Virtual Private Network |
| ZFS | Solaris/Linux filesystem focusing on data integrity |
| nginx | Popular HTTP server |

10 acronyms in this thread; the most compressed thread commented on today has 28 acronyms.

[Thread #508 for this sub, first seen 13th Feb 2024, 06:05]

Proxmox, TrueNAS, Debian with Cockpit, etc. Really, any type 1 hypervisor works.

Nothing can beat bhyve for pfSense.

We’ve been running KVM on CentOS/Rocky hosts for our VM platforms; seems to work fine for our needs.

I’m not sure how ESXi would differ as I’ve never used it, but may be an option if you want to roll your own vs proxmox.

I see a lot of love for proxmox in this thread.

Word of warning from my experience: sometimes pfSense seems to get confused with virtual interfaces. It works flawlessly once it’s up and running, but every time I reboot I have to assign interfaces. It will hang until I do so and will not completely come back online until I manually intervene.

As another Proxmox user: I’ve been doing well with it. I use these scripts for the LXCs, which have been fantastic:

https://tteck.github.io/Proxmox/

I can also log into it from the web, as it’s secured by Authentik (SSO with OIDC login), for when I’m away from home and need to manage it. Rare! But the option is there! :)

I ran it on Hyper-V for many years. Still running OPNsense that way. It manages 4 VLANs, RDNSBL, a metric ass ton of firewall rules, and several VPN clients and gateways, with just 2 GB of RAM and 4 virtual procs. It works and doesn’t even breathe hard.
