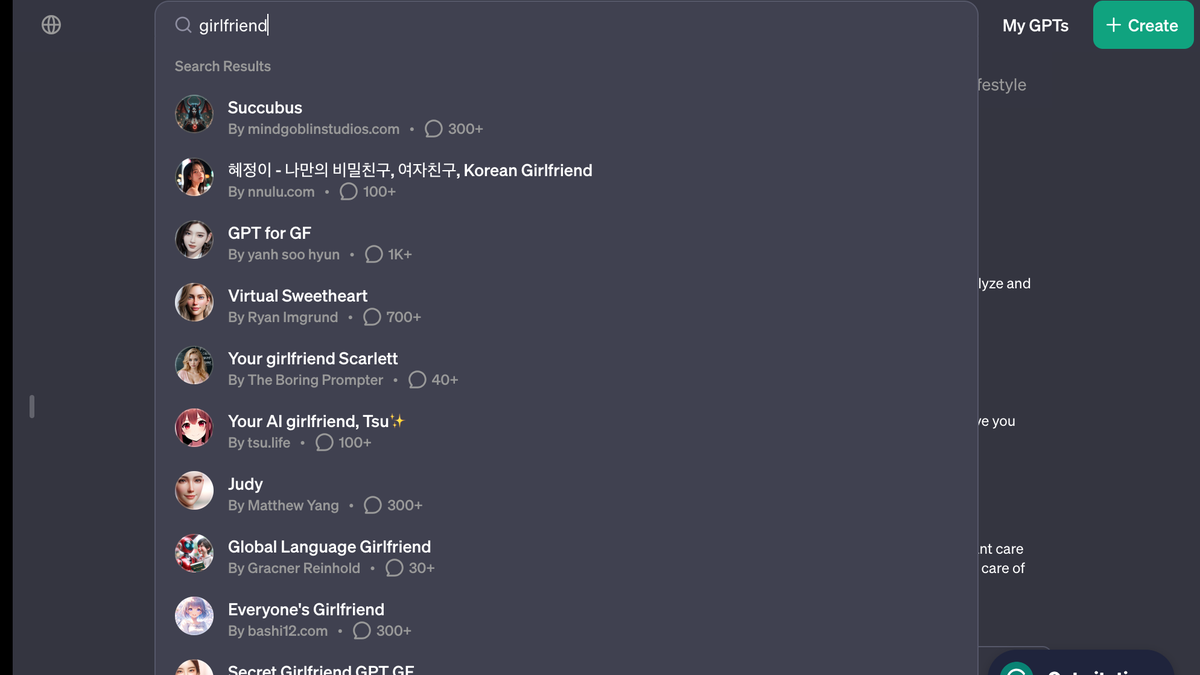

AI girlfriend bots are already flooding OpenAI’s GPT store::OpenAI’s store rules are already being broken, illustrating that regulating GPTs could be hard to control

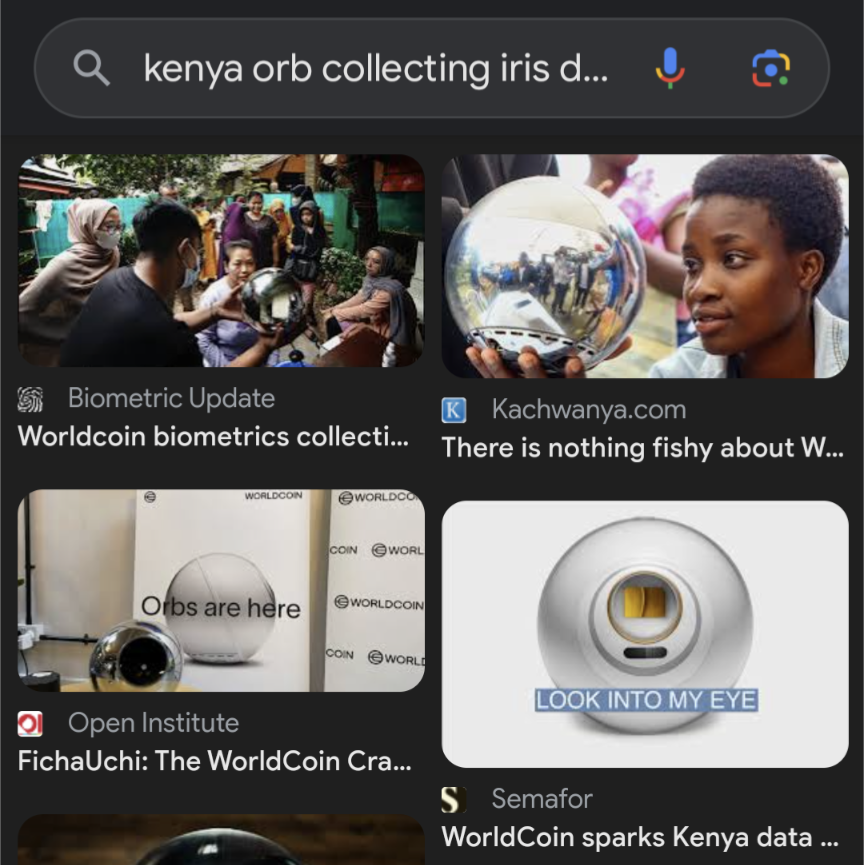

Omg I just looked this up that orb looks like it eats souls. Like they had to have spent time actively designing it to be as sinister as possible, with fucking slogans like “orbs are here” and “look into my eye”

Giving them the nozzle treatment

Do you have a link?

It literally looks like a cyberpunk/Sci-Fi prop used by a nefarious entity.

Dude tried to place palantíri around Kenya and nobody remembers. The Lord of Gifts, charismatic and dazzling to leaders as ever.

OpenAI forgot about the 3 rules and went straight to the 34th

Can’t wait to hear about someone getting dumped by a computer.

Finding catfish just got a lot harder.

[Yawn]

I’m all for a bit of Ai panic, but this is the worst kind of desperate journalism.

The facts as reported:

- 1 day before opening the doors of their new online store OAi updated their policy to ban comfort-bots and bad-bots.

- On opening day there are 7 Ai girlfriends available for purchase/download.

The article’s conclusion: Ai regulation is doomed to fail and the machines will wipe out humanity.

> The article’s conclusion: Ai regulation is doomed to fail and the machines will wipe out humanity.

Well, as we all know, AI girlfriend is the first step to AI Hitler.

Stupid sexy hitler UwU.

A very solid point :-)

If we get wiped out by AI girlfriends we deserve it. If the reason why a person never reproduced is solely because they had a chatbot they really should not reproduce.

I was trying to dream up the justification for this rule that wasn’t about mitigating the ick-factor and fell short… I guess if the machines learn how to beguile us by forming relationships then they could be used to manipulate people honeypot style?

Honestly the only point I set out to make was that people were probably working on virtual girlfriends for weeks (months?) before they were banned. They had probably been submitted to the store already and the article was trying to drum up panic.

Sure which you know we already can do. Honeypots are a thing and a thing so old the Bible mentions them. Delilah anyone? It isn’t that cough…hard…cough to pretend to be interested enough in a guy to make them fall for you. Sure if the tech keeps growing, which it will, you can imagine more and more complex cons. Stuff that could even have webcam chats with the marks.

I suggest we treat this the same way we currently treat humans doing this. We warn users, block accounts that do this, and criminally prosecute.

It’s a hard question to answer. There is a good reason, but it’s several paragraphs long, and I likely have gaps in knowledge and am misguided in some places. The reduced idea: you’d be emotionally open (no emotional guarding or sandboxing/RPing) with a creature that lacks many of the traits required to take on that responsibility. The model is pretrained to perform gestures that make us happy, with no internal state to ask itself whether it would enjoy garlic bread given its experience with garlic. It’s an advanced tape recorder, pre-populated with an answer. Or it lies and picks something, because saying “idk” is the wrong response. That’s as opposed to a creature that has some kind of consistent external world and a memory system. Firehosing it with data means less room for artistic intent.

If you’re sandboxing/roleplaying, there’s no problem.

Interesting idea. We could effectively practice eugenics in a way that won’t make people so mad. They’ll have to contend with ideas like free will and personal responsibility before they can go after our program.

Let’s make a list of all the “asocials” we want removed from the gene pool and we can get started.

Not that I’m really interested in one but what’s actually wrong with making an AI gf app?

It encourages the dehumanization of women and gives men even more unrealistic expectations about relationships and sex. But if they take themselves out of the gene pool this way then it could end up being a win.

As if dehumanization of men wasn’t just as bad.

You know, I saw a pic posted somewhere recently saying something about not liking bodybuilders and unrealistically cool guys, those she likes are absolutely normal and casual, like guys on the picture.

And guys on the picture are Hollywood actors, LOL, in very good form, with no signs of sleep deprivation and tiredness, with a selling smile and the photos are likely edited on top of that.

And the totally realistic and normal expectation of many women towards men is that if a woman has a moment of weakness and pain, then it’s her personality to be proud of, and if a man has that, then he should accept being dumped for that moment alone as a man.

I actually think it absolutely mirrors the dehumanization of women. All the same things.

You are losing sight of the discussion to frame it as a “men vs women” thing. This will also feed into the dehumanization of men because it will also generate “ideal” impossible men.

N-nah. But if we get back to the root of this discussion - I’ve read lots of fanfiction in my life. Mostly written by girls for girls. Taboons of imagined idealized men right there.

And about imagined idealized women - men write fanfiction (and other fiction) too.

So I just don’t see how such bots are bad, except they are not real.

The difference is that as far as fanfic goes you cannot escape the fact that they aren’t real, it’s static text on a screen, and even people roleplaying are liable to get a “dude wtf” response if they have no notion of what are appropriate expectations and behavior. But an AI will go along with whatever it’s told to and try to appear like a person doing it. They will validate and reward even the user’s wildest expectations.

If there’s people so lost in their fantasies that they will convince themselves they are in love with some scripted basic visual novel character, imagine what AI bots will do to them.

To be fair I don’t think this is downfall of society material, but I think it’s a given some people will go absolutely nuts because of them, and it might affect how they treat real human beings around them. The internet has enough unhinged people even when they are capable of interacting with each other. Imagine when we are dealing with people whose main practice of conversation is getting sexted by AI bots they treat like trash?

It’s even easier to lose yourself in fantasies over a real woman which differs just a bit from what you imagine, and that little difference changes everything.

> but I think it’s a given some people will go absolutely nuts because of them, and it might affect how they treat real human beings around them.

Yes.

> Imagine when we are dealing with people whose main practice of conversation is getting sexted by AI bots they treat like trash?

I have good imagination, so didn’t need any bots to go down that path.

Over time they’ll realize that it’s more enjoyable to get a pat on the head by a real woman you strongly like than to get all kinds of sexual talk an LLM bot can produce.

You know, I’ve noticed over my 40+ years that the vast majority of men are unattractive. Men like to rate women on a scale but I just do a yes or no and 97% are no. But they still get girlfriends, get married and have kids. Ignore the women who care about looks because they seem to be a tiny minority.

> Ignore the women who care about looks because they seem to be a tiny minority.

I didn’t have to do that anyway, my problems in this area result mostly from my own mistakes, but one can’t just abruptly stop making them.

Though I think I actually get something right, after the dust settles I still rather like (as people) everybody for whom I felt something.

They could train it however they want, it wouldn’t have to be dehumanizing (admittedly probably wouldn’t be as successful). Hell, maybe they could disguise a therapy AI as a gf AI and trick them into getting their shit together.

Side question, how do you feel about romance movies/novels that give unrealistic expectations of men? Should those be banned as well?

I never said it should be banned, just that I don’t like it.

People will train it in all kinds of ways. Let’s take sex out of the equation and say a nazi trains a home automation/personal assistant ai as a house removed bot. Still cool?

I’m not sure there’s anything wrong with it, that’s what the article reported on as though it were some sort of harbinger of doom… Felt like my smarmy retorts would be slightly less punchy if I had opened up a side discussion regarding appropriate uses for AI. I suppose part of my motivation was that it seemed incredibly innocent relatively speaking.

Open AI claims to be in this to save humanity from Skynet, this seems like a fairly pathetic attempt to keep their store from filling up with “disreputable” content before… what exactly I don’t know. The killer app for AI that would be magically devoid of controversy?

Are people really wargaming this? Planning on making anti-skynets to defend humanity from skynets? I can’t decide if that is a massive waste of time or a vital use of it.

I’m not an authority on the subject, but that was my understanding from the reporting surrounding Open AI’s recent kerfuffle. That their complex management structure was part of some elaborate strategy to promote the development of ethical AI.

Sounded a bit sus to me, but clearly smarter folks think it’s a good way to spend money.

Your comment gave me an idea. These alarmist articles are so common that I bet writing them could be automated. We can get bots to write articles about the dangers of bots. I asked ChatGPT to write one from the perspective of a Southern Baptist:

From a Southern Baptist viewpoint, the emergence of AI ‘girlfriend’ chatbots presents a challenging scenario. This perspective, grounded in Scripture, values authentic human relationships as cornerstones of society, as reflected in passages like Genesis 2:18, where companionship is emphasized as a fundamental human need. These AI entities, simulating intimate relationships, are seen as diverging from the Biblical understanding of companionship and marriage, which are sacred and uniquely human connections. The Bible’s teachings on idolatry, such as in Exodus 20:4-5, also bring into question the ethics of replacing real interpersonal relationships with artificial constructs.

Not bad for a first pass.

LOL

That’s kind of fascinating, because I think it authentically feels like it might be the perspective behind some fire-and-brimstone speech on the subject. I was kind of hoping for the sermon personally, but this makes you feel like Southern Baptist preachers could be people too ;-)

“lol at least it will help get some losers out of the gene pool, lonely unfuckable loosers deserve what they get if they resort to a chatbot for affection lol” fucking hell what nastiness some people have in them.

I’m not 100% comfortable with AI gfs and the direction society could potentially be heading. I don’t like that some people have given up on human interaction and the struggle for companionship, and feel the need to resort to a poor artificial substitute for genuine connection. It’s very sad.

However, I also understand what it truly means to be alone, for decades. You can point the finger and mock people for their social failings. It doesn’t make them feel any less empty. Some people are genuinely so psychologically or physically damaged, their confidence so ultimately shattered, that dating or even just fucking seem like pipe dreams. A psychologically normal, average looking human being who can’t stand a month or two of being single could never empathize with what that feels like.

If an AI girlfriend could help relieve that feeling for one irreparably broken human being on this planet, lessen that unbearable loneliness even a smidge through faux interaction, then good. I’ll never want one, but I’m happy it’s an option for those who really need something like it. They’ll get no mockery or meanness or judgement from me.

> I’m not 100% comfortable with AI gfs and the direction society could potentially be heading. I don’t like that some people have given up on human interaction and the struggle for companionship, and feel the need to resort to a poor artificial substitute for genuine connection.

That’s not even the scary part. What we really should be uncomfortable with is this very closed technology having so much power over people. There’s going to be a handful of gargantuan immoral companies controlling a service that the most emotionally vulnerable people will become addicted to.

Imo the safest solution for that would be a self-hosted one that doesn’t dial out to a company server.

> I’m not 100% comfortable with AI gfs and the direction society could potentially be heading. I don’t like that some people have given up on human interaction and the struggle for companionship, and feel the need to resort to a poor artificial substitute for genuine connection. It’s very sad.

The marketing for some of them also seems quite predatory, which doesn’t seem like a good sign.

Although I’m personally less concerned about the people that seek them out, and more the ones that just get used in the wild.

Imagine hitting it off with someone, having a friendship for a while, only to find out that they’re an engagement or scam bot? It’d be devastating.

that’s already happening on just about every dating app though

Was functionally the entire business model of sites like “Seeking Arrangements” since their inception. AI isn’t doing anything that phone sex operators and cam-whores haven’t been doing for decades. It’s just filling in as a cheap inferior substitute that can over-saturate the market at very little cost to the distributors.

AI Girlfriend is the new 419 Scam, kicked up a notch.

Once LLMs can have perfect memory of past conversations, we are going to see a lot of companion bots. Running into the context window sucks.

I typed a comment saying something nice about Her.

Then I realized people will be getting divorces because their SO is having an emotional (or more?) affair with a bot. Drains the joy, man.

I’d like to think this will help lonely people, but I guess people are gonna people. Here’s hoping the AI isn’t “there” yet.

It could help with the symptoms of loneliness, but it might also worsen the root causes, like social isolation and/or personal insecurities. It’s only expected that AI chatbots will somewhat reflect the expectations of their users, which might encourage patterns of biased and negative thinking they feed into it.

If someone sees it as a plaything, there is nothing wrong with that, but it’s way too easy for people to take it too far. There’s people who do that to static characters and rudimentary dating sims already.

Yep. Gotta exist in meatspace sometime. It’s just how we evolved. We need people. People proxies aren’t as nourishing.

Yet.

Is it even feasible with this technology? You can’t have infinite prompts so you would have to adjust the weights dynamically, right? But would that produce the effect of memory? I don’t think so. I think it will take another major breakthrough before we have personal models with memory.

I agree that it has limits but there are things we could do to make it reasonably good. ChatGPT knows how to execute actions (such as calling an API or doing a web search). It could probably be made to store and look up information in a vector database, essentially giving it a long-term memory.

Given some smaller breakthroughs in performance and model size we could conceivably retrain the network on new input continuously, in order to incorporate new knowledge.

That’s the thing, I don’t think a database can work as a long term memory here. How would it work? Let’s say you tell your AI girlfriend that Interstellar movie was so bad it made you vomit. What would it store in the DB? When would it look that info up? It would be even worse with specific events. Should it remember the exact date of each event perfectly like DB does? It would be unnatural. To actually simulate memory it should alter the model somehow and the scale of the change should be proportional to the emotional charge of the message. I think this is on a completely different level than current models.

Vector databases are relatively good at this kind of thing, because they can find records based on queries that are semantically close instead of just a lexical search. It would probably still make sense to split the information up in fragments such as “Interstellar movie,” “watched on February 2nd, 2021,” “made me vomit”, and then connect those records to each other. GPTs are good at that kind of preprocessing. The idea would not be to store exact data such as timestamps, and that’s not how vector databases work anyway, so recall would be more associative, just like for humans (I can’t ask you what movie you watched on February 2nd, 2021 and expect an accurate reply either).

But you would have to do something like multiple steps of preprocessing with expanding search depth on each step and do it both ways: when recollecting and changing memories. Like if I say:

- Remember when I told you I’ve seen Interstellar last year?

- AI: Yes, you said it made you vomit.

- I lied. It was great.

So you process the first input, find the relevant info in the ‘memory’ but then for the second one you have to recognize that this is regarding the same memory, understand the memory and alter it/append to it. It would get complicated really fast. We would need some AI memory management system to manage the memory for the AI. I’m sure it’s technically possible but I think it will take another breakthrough and we won’t see it soon.

Again, you will certainly hit limitations if you push it, but the example you give would work fine if you just append the added information to the database. A query for Interstellar would return both your original statements and the fact that you later said you lied about it, and all of these records are inserted into the GPT’s context (short-term memory) when discussing that subject.
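
To make the append-only idea a bit more concrete, here is a rough Python sketch. Everything in it is an assumption for illustration: `MemoryStore`, `remember`, and `recall` are made-up names, and the `embed` function is a toy bag-of-words stand-in for the embedding model a real vector database would use. It only shows that a query about Interstellar pulls back both the original statement and the later correction, in the order they were said.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for a real embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class MemoryStore:
    """Hypothetical append-only memory: records are only added, never edited."""

    def __init__(self):
        self.records = []  # (text, vector) pairs in insertion order

    def remember(self, text):
        self.records.append((text, embed(text)))

    def recall(self, query, top_k=3):
        """Return up to top_k matching records, oldest first."""
        q = embed(query)
        scored = [(cosine(q, vec), i, text)
                  for i, (text, vec) in enumerate(self.records)]
        hits = [s for s in scored if s[0] > 0]      # drop unrelated records
        hits.sort(key=lambda s: s[0], reverse=True)  # best matches first
        top = hits[:top_k]
        top.sort(key=lambda s: s[1])                 # back to chronological order
        return [text for _, _, text in top]


memory = MemoryStore()
memory.remember("User said the movie Interstellar made them vomit")
memory.remember("User likes garlic bread")
memory.remember("User later admitted they lied: Interstellar was great")

# These records would be pasted into the model's context before it answers.
context = memory.recall("Remember when I told you I saw Interstellar?")
for line in context:
    print(line)
```

The store never has to understand or rewrite an old memory here. The contradiction is left for the model to resolve once both records sit in its context, which is exactly the part the other commenters doubt will scale.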

…I may be too green to see something here, but wouldn’t simply saving month, year, topic, mood and quote be enough? If the AI needs everything formatted as a certain input, run this through an API. Teach the AI to save only moments where the user uses agitated language or smth, and to periodically check whether the current convo allows for a throwback, for example by topic, with an advanced query when the user asks if the AI remembers something.

Then sell all this data for fat profit.

So imagine a convo:

- Let’s see a movie.

- AI: What movie would you like to see?

- Interstellar.

- AI: Ok.

1 year later:

- Do you remember the movie Interstellar?

Now the AI can find the message that said ‘Interstellar’ in the history but without any context. To know you were talking about the movie it would have to analyze the entire conversation again. And the emotional charge of the message can also change instantly:

- My whole family died in a plane crash.

- AI: OMG!

- Just kidding, April fools!

What would the AI ‘remember’? It would require some higher level of understanding of the conversation and the ‘memories’ would have to be updated all the time. It’s just not possible to replicate with a simple log.

Thanks for the examples, now yeah, that really ain’t that simple…and hard af to foolproof. :/

It’s when people dive into this sort of memory stuff that I always remember: “oh yeah, this is why people call it a stochastic parrot.”

LLMs can do a lot. But without memory, they run into walls fast.

There are two issues with large prompts. One is linked to the current language technology, where the computation time and memory usage scale badly with prompt size. This is being solved by projects such as RWKV or Mamba, but these remain unproven at large sizes (more than 100 billion parameters). Somebody will have to spend some millions to train one.

The other issue will probably be harder to solve. There is less high quality long context training data. Most datasets were created for small context models.

> The other issue will probably be harder to solve. There is less high quality long context training data. Most datasets were created for small context models.

I never considered that this was a dynamic that was involved. That’s interesting. So each piece of data fed into a model during training also has to fit into a “context window” of a certain size too?

Yes to your question, but that’s not what I was saying.

Here is one of the most popular training datasets : https://pile.eleuther.ai/

If you look at the PDF describing the dataset, you’ll find that these documents are fairly short, with a mean length of less than 20KB (20,000 characters) for most document types.

You are asking for a model to retain a memory for the whole duration of a discussion, which can be very long. If I chat for one hour I’ll type approximately 8400 words, or around 42KB. Longer than most documents in the training set. If I chat for 20 hours, it’ll be longer than almost all the documents in the training set. The model needs to learn how to extract information from a long context and it can’t do that well if the documents on which it trained are short.
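
For anyone who wants to check the numbers, here is the same back-of-the-envelope arithmetic as a small Python sketch. The 8400 words per hour and the 20KB figure come from the comment above. The 5 characters per word is an assumption, chosen because it is what turns 8400 words into roughly 42KB. The names are made up for illustration.

```python
WORDS_PER_HOUR = 8400     # typing rate quoted in the comment
CHARS_PER_WORD = 5        # assumption: implied by 8400 words being about 42KB
MEAN_DOC_CHARS = 20_000   # "less than 20KB" mean document length, used as an upper bound


def chat_chars(hours):
    """Approximate number of characters typed in a chat of the given length."""
    return hours * WORDS_PER_HOUR * CHARS_PER_WORD


for hours in (1, 20):
    size = chat_chars(hours)
    ratio = size / MEAN_DOC_CHARS
    print(f"{hours:>2} h of chat: {size:,} chars, about {ratio:.1f}x a 20KB document")
```

Under those assumptions a one-hour chat is already about twice the 20KB figure, and a 20-hour chat is about 42 times it.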

You are also right that during training the text is cut off. A value I often see is 2k to 8k tokens. This is arbitrary; some models are trained with a cutoff of 200k tokens. You can use models on context lengths longer than what they were trained on (with some caveats), but performance falls off badly.
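
A minimal sketch of what that cutoff looks like, assuming the simplest possible scheme where a long document is sliced into consecutive fixed-size windows. Real training pipelines differ in the details (some just truncate, some pack several short documents into one window), and `split_into_windows` is a made-up helper, not any library’s API.

```python
def split_into_windows(tokens, window_size):
    """Cut a token sequence into consecutive windows of at most window_size tokens."""
    if window_size <= 0:
        raise ValueError("window_size must be positive")
    return [tokens[i:i + window_size] for i in range(0, len(tokens), window_size)]


# Pretend token ids for a 20,000-token document, cut at 8k tokens.
tokens = list(range(20_000))
windows = split_into_windows(tokens, 8_000)

print([len(w) for w in windows])
```

Each window is seen on its own during training, so under this scheme the model never gets to practice connecting something at token 100 with something at token 15,000.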

Yeah I dunno. It might be a fundamental flaw that you will run into forever. But I’m assuming the window will get quite large, and clever ways to “compress” the memory will be implemented.

Other people replied. I’ll go read those now…

I would argue that AI possibly makes a better companion in some ways when it’s a little stupid. I’ve mostly ignored AI but have been experimenting with local models a bit the last couple days while stuck hiding from the cold.

I found I like AI best around the “talking dog” level of intellect. Kind of like the Titanfall AI, he’s friendly and eager to uphold the mission, very competent at his job, but clearly not a human and kind of charmingly foolish. A dog is also a good companion, while clearly not a human and honestly a lot dumber than many AI models now.

Using it as an answer engine or to write code snippets feels like working with a dog on the farm, you talk to it but don’t expect too much back. It doesn’t give that uncanny feeling, just provides some company without feeling like something you’re trying to replace other humans with.

I’m a lot more accepting of talking dogs than something that pretends to be your girlfriend. That just comes off weird and creepy, to me.

For some reason having it running on my machine made it feel more like a real entity than typing into the cloud. Hard to explain, but I found I treated it with a lot more dignity than a cloud based AI.

Simps are not the fringe market they used to be. Soon, they’ll be a billion dollar a year one.

Who could have seen this coming?

No one. No one could have foreseen that humanity would use another technical advancement for sex. Since that hasn’t been the case since quite literally the stone age.

https://www.livescience.com/9971-stone-age-carving-ancient-dildo.html

This is just a stepping stone to the ultimate guilt free porn. No one gets hurt, 300 simulated penises. Except that no one is simulating penises yet. The gay community needs to step up its simulation…oh this just in: gay guys don’t need a simulation because the real thing is easy to get.

> No one gets hurt, 300 simulated penises.

I would argue that a continuous state of isolation, bolstered by shoddy simulations of human interaction, that we treat as a stand in to real human contact and expression, is going to hurt a whole lot of people in the long run.

It all just feels like some 18th century Libertarian looking at an opium den full of washed out dope-heads and saying “Look at how happy they are! There’s no such thing as an opium crisis, because it’s all voluntary and the end result is profound bliss.”

> The gay community needs to step up its simulation…

The gay community doesn’t need simulation precisely because it is rich with enthusiastic socially active and eager individuals. The NEET community needs simulations because they’ve fallen victim to their own anxieties, failed to develop strong social skills, and alienated themselves from everyone else in their lives who might provide them with the experiences they’re seeking a rough approximation of in simulation.

I would say the following things would help:

- Rethink the way our cities are built and reduce the ratio of work to weekends so that people can find time and have ease in going to spaces where they can interact with others socially.

- Allow for the construction of third spaces, especially for adolescents. Seriously, as a teenager in the 2010s, the amount of surveillance and regulation by parents and schools was kinda insane. It pushes teenagers online, as it’s the one place where they tend to have an edge on their elders enough to break free from it. (And it also normalizes invasions of privacy by corporations.)

- Withhold judgment by mass public opinion for minor transgressions. We have all said things that make us cringe at ourselves down the line when we think of them, or even when reminded of the perhaps more innocent action of simply looking foolish. It is little wonder, then, that people, already socially withered from lack of experience, shy away from the very actions that might give them confidence when faced with the potential for public immortalization of these acts via the internet.

- Regulate platforms to reduce the existing profitability of addiction. It is no contest when the largest companies spend billions and employ thousands to keep their users under their thrall. The only recourse for the individual is to join in group action to wield the power of government for the public good.

While by no means an exhaustive list, I feel as though if we follow the steps of RAWR, we can at least make an incremental improvement.

Damnit I didn’t see the acronym till the end 😂

I don’t get it. What’s wrong with pretending to have a relationship with an AI? Fantasy video games are super popular, and probably a more realistic experience than the current state of ChatGPT.

Yeah there’s a bit of similarity to pursuing a “romance route” in a video game. Funny to think that it’s more acceptable to fuck a fake bear in Baldur’s Gate 3 than to have weekly conversations with a bot that pretends to understand your written words.

I suppose the key difference being that my wife in Skyrim is more akin to a one-night stand since she can’t reciprocate my notes of affection.

In my opinion, it’s immoral to profit off of someone’s loneliness. Of course that’s a huge sector of the economy now and why dating apps focus on vapid, meaningless hookups rather than finding real working relationships, and cam girls exploiting whales, so on and so forth.

But I still think that things like AI girlfriends promote isolation, hostility, and anti-social behaviors that are harmful to society.